Job Types

Conscia offers several out-of-the-box Job Types that you can use to instantiate a Job and, optionally, schedule it. Each Job Type can be run independently of the others, although some have overlapping functionality.

Import Data Files

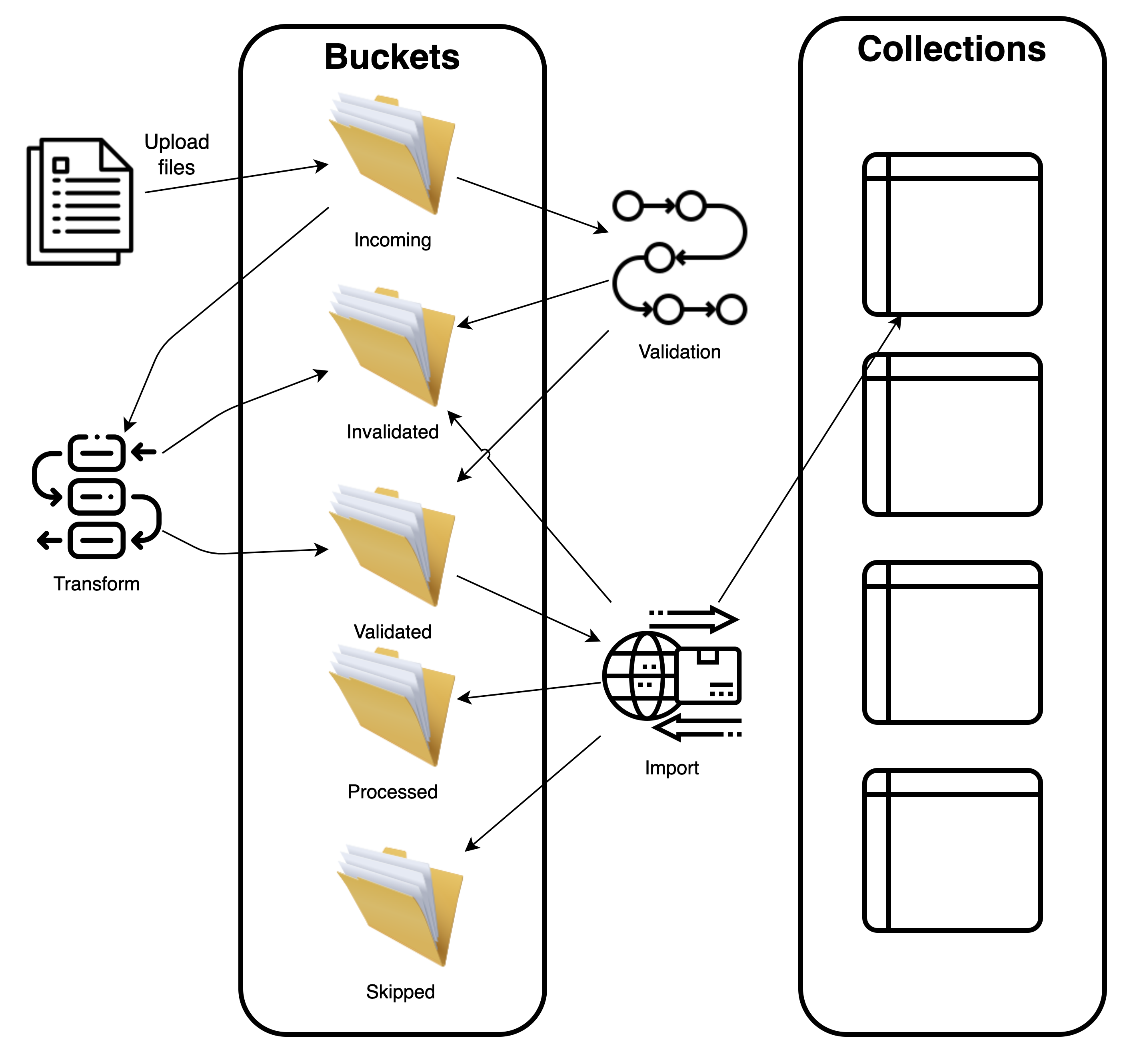

The Import Data Files job validates Data Files (like the Validate Data Files job) and imports them into a Data Collection. Depending on whether the Data Files were imported successfully, they are moved to different buckets, which are specified in the Job definition. The job can also transform the data before loading it into the Data Collection.

For more information on how to generally work with data files, please see the documentation here.

Input Parameters

| Parameter | Description |
|---|---|
| Incoming Data Bucket | The DX Graph Bucket that contains the Data Files specified by Filename Pattern. |
| Skipped Bucket | A file that is skipped because Process Last Matched File is true is moved here. It is not validated. |
| Processed Bucket | A file that is fully imported into a collection with no validation errors is moved here. A file that has validation errors when Skip Invalid Records is true is also moved here, along with the corresponding error files. |
| Invalid Data Bucket | Mandatory if Skip Invalid Records is false. A file that has any validation errors when Skip Invalid Records is false is moved here along with the corresponding error files. |
| Filename Pattern | Groups files into a set to be processed together, e.g. products_*.csv |
| Source Schema | The JSON Schema that the Data Files must conform to. |
| Record Identifier Field | Indicates which field uniquely identifies the records in the Data Files. Validation errors use this to point out erroneous records. |
| Parse Options | Configures how to parse the source Data Files, e.g. whether the files are delimited, Excel, or JSON. |
| Collection Code | The Data Collection to import the Data Files into. |
| Transformers | A list of transformations applied to each validated source record. |
| Target Schema | The JSON Schema applied to the transformed records. Default: the schema of the specified Collection Code. |
| Skip Invalid Records | Default: false. If false and any validation errors occur, no data is imported. |
| Process Last Matched File | Data Files are scanned in alphabetical order, so you can use filenames to control the processing sequence. If this parameter is true, only the last matching Data File is processed and the preceding files are skipped. |
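The interaction between Skip Invalid Records, Process Last Matched File, and the three destination buckets can be summarized as a small decision function. The following is only a sketch of the rules in the table above, not Conscia's implementation: `routeFile`, `planFiles`, their argument names, and the returned labels are all hypothetical. It also assumes that when Skip Invalid Records is true the valid records of a file are still imported, which the table implies but does not state outright.

```javascript
// Hypothetical sketch of the bucket routing described in the table above.
// None of these names come from the Conscia API.
function routeFile(file, options = {}) {
  const { skipInvalidRecords = false, processLastMatchedFile = false } = options;

  // Only the last matching file is processed; earlier ones are skipped unvalidated.
  if (processLastMatchedFile && !file.isLastMatch) {
    return { bucket: "Skipped Bucket", validated: false, imported: false };
  }
  // No validation errors: the file is fully imported.
  if (file.validationErrors === 0) {
    return { bucket: "Processed Bucket", validated: true, imported: true };
  }
  // Validation errors: the outcome depends on Skip Invalid Records.
  if (skipInvalidRecords) {
    // Assumption: valid records are imported; invalid ones end up in error files.
    return { bucket: "Processed Bucket", validated: true, imported: true };
  }
  return { bucket: "Invalid Data Bucket", validated: true, imported: false };
}

// Sort filenames alphabetically and mark which one is the last match.
function planFiles(filenames) {
  const sorted = [...filenames].sort();
  return sorted.map((name, i) => ({ name, isLastMatch: i === sorted.length - 1 }));
}
```

With Process Last Matched File enabled, every file except the final entry returned by `planFiles` would be routed to the Skipped Bucket without being validated.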

Validate Data Files

The Validate Data Files job ensures that a set of Data Files (specified by a filename pattern) is parseable and conforms to a specified schema. Depending on whether the Data Files were validated successfully, they can be moved to a specified Data Bucket.
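To make the idea of schema validation with per-record error reporting concrete, here is a deliberately tiny sketch. It is not how the job validates files: it checks only a small subset of JSON Schema (`required`, plus `type` for `string`, `number`, and `boolean`), and the function name `validateRecords` and the shape of the error objects are hypothetical. The third argument plays the role of a record identifier field, so each error points at the offending record.

```javascript
// Hypothetical sketch: checks only `required` and primitive `type` keywords.
// A real JSON Schema validator covers far more than this.
function validateRecords(records, schema, recordIdentifierField) {
  const errors = [];
  records.forEach((record, index) => {
    // Fall back to the row position when the identifier itself is missing.
    const id = record[recordIdentifierField] ?? `row ${index + 1}`;

    for (const field of schema.required ?? []) {
      if (record[field] === undefined) {
        errors.push({ id, field, message: "missing required field" });
      }
    }
    for (const [field, rule] of Object.entries(schema.properties ?? {})) {
      const value = record[field];
      if (value !== undefined && rule.type && typeof value !== rule.type) {
        errors.push({ id, field, message: `expected ${rule.type}, got ${typeof value}` });
      }
    }
  });
  return errors;
}

const schema = {
  required: ["sku"],
  properties: { sku: { type: "string" }, price: { type: "number" } },
};
const records = [
  { sku: "A1", price: 10 },
  { sku: "A2", price: "ten" }, // wrong type for price
  { price: 5 },                // missing sku
];
const validationErrors = validateRecords(records, schema, "sku");
```

A file would count as successfully validated only when the returned list is empty.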

Input Parameters

| Parameter | Description |
|---|---|
| Source Data Bucket | The DX Graph Bucket that contains the Data Files specified by Filename Pattern. |
| Validated Data Bucket | If this is specified, successfully validated Data Files are moved to this Bucket. |
| Invalid Data Bucket | If this is specified, Data Files that fail validation are moved to this Bucket. |
| Filename Pattern | Groups files into a set to be processed together, e.g. products_*.csv |
| Source Schema | The JSON Schema that the Data Files must conform to. |
| Record Identifier Field | Indicates which field uniquely identifies the records in the Data Files. Validation errors use this to point out erroneous records. |
| Parse Options | Configures how to parse the source Data Files, e.g. whether the files are delimited, Excel, or JSON. |
| Collection Code | The Data Collection to import the Data Files into. |
| Transformers | A list of transformations applied to each validated source record. |
| Target Schema | The JSON Schema applied to the transformed records. Default: the schema of the specified Collection Code. |
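As an illustration of how a Filename Pattern such as products_*.csv groups files, the sketch below converts the pattern to a regular expression and returns the matching filenames in sorted order. This is an assumption-laden sketch rather than the product's matching logic: it treats only `*` as a wildcard, uses JavaScript's default string sort as a stand-in for "alphabetical order", and the helper names are hypothetical.

```javascript
// Hypothetical helper: treat `*` as "any run of characters" and everything else literally.
function patternToRegExp(pattern) {
  const escaped = pattern.replace(/[.+?^${}()|[\]\\]/g, "\\$&"); // escape regex metacharacters except *
  return new RegExp("^" + escaped.replace(/\*/g, ".*") + "$");
}

// Return the filenames that match the pattern, sorted with the default string sort.
function matchingFiles(filenames, pattern) {
  const matcher = patternToRegExp(pattern);
  return filenames.filter((name) => matcher.test(name)).sort();
}

const bucketContents = [
  "products_20240102.csv",
  "orders_20240101.csv",
  "products_20240101.csv",
  "products_20240101.csv.bak",
];
const selected = matchingFiles(bucketContents, "products_*.csv");
// selected: ["products_20240101.csv", "products_20240102.csv"]
```

Because the files are ordered by name, embedding a sortable date in the filename, as in this example, is one way to control the processing sequence.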

Transform Data Files

The Transform Data Files job validates Data Files (like the Validate Data Files job) and transforms them.

Input Parameters

| Parameter | Description |
|---|---|
| Source Data Bucket | The DX Graph Bucket that contains the Data Files specified by Filename Pattern. |
| Target Data Bucket | A file with no validation errors, or a file that has validation errors when Skip Invalid Records is true, is moved here along with the transformed file and any corresponding error files. The transformed files are in JSONL format and are named {{sourceFilename}}.YYYYMMDD_HHmmss.transformed.jsonl, where YYYYMMDD_HHmmss is the timestamp of when the file was generated. |
| Invalid Data Bucket | Mandatory if Skip Invalid Records is false. A file that has any validation errors when Skip Invalid Records is false is moved here along with the corresponding error files. |
| Filename Pattern | Groups files into a set to be processed together, e.g. products_*.csv |
| Source Schema | The JSON Schema that the Data Files must conform to. |
| Record Identifier Field | Indicates which field uniquely identifies the records in the Data Files. Validation errors use this to point out erroneous records. |
| Parse Options | Configures how to parse the source Data Files, e.g. whether the files are delimited, Excel, or JSON. |
| Transformers | A list of transformations applied to each validated source record. |
| Target Schema | The JSON Schema applied to the transformed records. Default: the schema of the specified Collection Code. |
| Skip Invalid Records | Default: false. If false and any validation errors occur, no data is imported. |
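The naming convention for transformed files, {{sourceFilename}}.YYYYMMDD_HHmmss.transformed.jsonl, can be reproduced with a few lines of code, which is handy when a downstream process needs to predict or parse these names. The helper below is a hypothetical sketch. In particular, it assumes the timestamp is expressed in UTC, which the documentation does not specify.

```javascript
// Hypothetical helper that builds a name in the documented format.
// Assumption: the timestamp is rendered in UTC.
function transformedFilename(sourceFilename, generatedAt = new Date()) {
  const pad = (n) => String(n).padStart(2, "0");
  const datePart =
    generatedAt.getUTCFullYear() +
    pad(generatedAt.getUTCMonth() + 1) + // months are zero-based in JavaScript
    pad(generatedAt.getUTCDate());
  const timePart =
    pad(generatedAt.getUTCHours()) +
    pad(generatedAt.getUTCMinutes()) +
    pad(generatedAt.getUTCSeconds());
  return `${sourceFilename}.${datePart}_${timePart}.transformed.jsonl`;
}

const exampleName = transformedFilename(
  "products_1.csv",
  new Date(Date.UTC(2024, 0, 5, 9, 7, 3))
);
// exampleName: "products_1.csv.20240105_090703.transformed.jsonl"
```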

The following diagram shows how the Data File jobs fit into the overall Data File processing workflow.

Call Webservice Endpoint

This job type calls a webservice endpoint. It can be used to call any webservice endpoint, including REST and GraphQL endpoints, using any HTTP method (e.g. POST, PATCH, DELETE, PUT, etc.). The job type supports the GET, POST, PUT, and DELETE HTTP methods.

Input Parameters

| Parameter | Required | Description |
|---|---|---|
| url | Yes | The URL of the webservice endpoint to call. |
| method | Yes | The HTTP method to use when calling the webservice endpoint. |
| headers | No | The headers to include in the request. |
| body | No | The body to include in the request. |
| searchParams | No | The query parameters to include in the request. |
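To show how these five parameters map onto an ordinary HTTP request, the sketch below turns a parameter object into a URL and an options object of the kind accepted by the standard `fetch` function. It only builds the request and does not send it. The helper name `buildRequest`, the example URL, and the choice to serialize object bodies as JSON are assumptions made for illustration, not documented behavior of the job.

```javascript
// Hypothetical helper: map the job parameters onto a URL plus fetch-style options.
function buildRequest({ url, method, headers = {}, body, searchParams = {} }) {
  const target = new URL(url);
  // Append each query parameter to the URL.
  for (const [key, value] of Object.entries(searchParams)) {
    target.searchParams.set(key, String(value));
  }
  const init = { method, headers };
  if (body !== undefined) {
    // Assumption: object bodies are sent as JSON text.
    init.body = typeof body === "string" ? body : JSON.stringify(body);
  }
  return { url: target.toString(), init };
}

const request = buildRequest({
  url: "https://example.com/api/items", // placeholder endpoint
  method: "POST",
  headers: { "Content-Type": "application/json" },
  body: { name: "Sample item" },
  searchParams: { page: 2 },
});
// request.url: "https://example.com/api/items?page=2"
```

The result could then be passed along as `fetch(request.url, request.init)`.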

Call DX Engine

| Job Type Code | Description |
|---|---|
| callDxEngine | This job type is used to call DX Engine. |

Input Parameters

| Parameter | Required | Description |
|---|---|---|
| templateCode | Yes | The DX Engine Template to invoke. |
| context | No | The context to include in the request. |

Process File With Webservice Endpoint

| Job Type Code | Description |
|---|---|
| processFileWithWebserviceEndpoint | The Job Type Process File With Webservice Endpoint is used to process a file and send its records to a webservice endpoint. The file can be any delimited file or a JSON file (array or newline-delimited). The job type supports sending batches of records to the webservice endpoint. |

Input Parameters

| Parameter | Required | Description |
|---|---|---|
| dataBucketCode | Yes | The data bucket code containing the file to process. |
| filename | Yes | The file that contains the records to process. This can be any delimited file or a JSON file (array or newline-delimited). |
| batchSize | No | The batch size to use. Defaults to 1. A batch size of 3 sends an array of 3 JSON records to the webservice. |
| webserviceEndpoint | Yes | The webservice endpoint to call. This is an object. Each of its properties can contain a JavaScript expression (within backticks) that references a variable called records, which is the array of batched records (based on batchSize) from the file. |
| webserviceEndpoint.url | Yes | The URL of the webservice endpoint to call. |
| webserviceEndpoint.method | Yes | The HTTP method to use when calling the webservice endpoint. |
| webserviceEndpoint.headers | No | The headers to include in the request. |
| webserviceEndpoint.body | No | The body to include in the request. |
| webserviceEndpoint.searchParams | No | The query parameters to include in the request. |

Example:

Take the following CSV file: people.csv

    name,email
    John Doe,john@email.com
    Jane Doe,jane@email.com
    Jim Doe,jim@example.com
    Jill Doe,jill@example.com

The following parameters will send batches of two JSON records to a webservice endpoint.

    {
      "dataBucketCode": "my-data-bucket",
      "filename": "people.csv",
      "batchSize": 2,
      "webserviceEndpoint": {
        "url": "https://my-webservice.com/api/v1/records",
        "method": "`records[0].method`",
        "headers": {
          "Content-Type": "application/json"
        },
        "body": {
          "data": "`records`",
          "length": "`records.length`"
        }
      }
    }

The body of the first request will be:

    {
      "data": [
        { "name": "John Doe", "email": "john@email.com" },
        { "name": "Jane Doe", "email": "jane@email.com" }
      ],
      "length": 2
    }

followed by:

    {
      "data": [
        { "name": "Jim Doe", "email": "jim@example.com" },
        { "name": "Jill Doe", "email": "jill@example.com" }
      ],
      "length": 2
    }
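The batching and expression behavior shown in this example can be approximated in a few lines. The sketch below is not the engine's implementation: `toBatches` and `resolveExpressions` are hypothetical names, and using `new Function` to evaluate backtick-wrapped strings is merely one simple way to imitate the behavior (it should never be used on untrusted input). It splits the four records from people.csv into batches of two and resolves the body template against each batch.

```javascript
// Split records into consecutive batches of at most `batchSize` items.
function toBatches(records, batchSize = 1) {
  const batches = [];
  for (let i = 0; i < records.length; i += batchSize) {
    batches.push(records.slice(i, i + batchSize));
  }
  return batches;
}

// Recursively resolve values: a string wrapped in backticks is treated as a
// JavaScript expression evaluated with `records` in scope (illustration only).
function resolveExpressions(value, records) {
  if (typeof value === "string" && value.length > 1 && value.startsWith("`") && value.endsWith("`")) {
    return new Function("records", `return (${value.slice(1, -1)});`)(records);
  }
  if (Array.isArray(value)) {
    return value.map((item) => resolveExpressions(item, records));
  }
  if (value !== null && typeof value === "object") {
    return Object.fromEntries(
      Object.entries(value).map(([key, item]) => [key, resolveExpressions(item, records)])
    );
  }
  return value;
}

const people = [
  { name: "John Doe", email: "john@email.com" },
  { name: "Jane Doe", email: "jane@email.com" },
  { name: "Jim Doe", email: "jim@example.com" },
  { name: "Jill Doe", email: "jill@example.com" },
];
const bodyTemplate = { data: "`records`", length: "`records.length`" };

// One resolved body per batch, in file order.
const bodies = toBatches(people, 2).map((batch) => resolveExpressions(bodyTemplate, batch));
```

Here `bodies` holds two objects that correspond to the two request bodies of the example, each with a `data` array of two records and a `length` of 2.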

Process File With DX Engine

| Job Type Code | Description |
|---|---|
| processFileWithDxEngine | The Job Type Process File With DX Engine is used to process a file and send its records to DX Engine. The file can be any delimited file or a JSON file (array or newline-delimited). The job type supports sending batches of records to DX Engine. |

Input Parameters

| Parameter | Required | Description |
|---|---|---|
| dataBucketCode | Yes | The data bucket code containing the file to process. |
| filename | Yes | The file that contains the records to process. This can be any delimited file or a JSON file (array or newline-delimited). |
| batchSize | No | The batch size to use. Defaults to 1. A batch size of 3 sends an array of 3 JSON records to DX Engine. |
| environmentCode | Yes | The DX Engine environment code to use. |
| token | Yes | The DX Engine token to use. |
| templateCode | Yes | The DX Engine template to invoke. This can contain a JavaScript expression (within backticks) that references a variable called records, which is the array of batched records (based on batchSize) from the file. |
| context | No | The context to include in the request. Defaults to {}. |
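The Required column and the stated defaults can be read as a small contract for the job's parameters. The sketch below encodes that contract for illustration only. `normalizeParams` is a hypothetical helper rather than a Conscia function, and the environment code, token, and template code in the example are placeholders.

```javascript
// Parameters marked "Yes" in the Required column of the table above.
const REQUIRED_PARAMS = ["dataBucketCode", "filename", "environmentCode", "token", "templateCode"];

// Hypothetical helper: reject missing required parameters and apply documented defaults.
function normalizeParams(params) {
  const missing = REQUIRED_PARAMS.filter(
    (name) => params[name] === undefined || params[name] === ""
  );
  if (missing.length > 0) {
    throw new Error(`Missing required parameters: ${missing.join(", ")}`);
  }
  return {
    ...params,
    batchSize: params.batchSize ?? 1, // documented default
    context: params.context ?? {},    // documented default
  };
}

const jobParams = normalizeParams({
  dataBucketCode: "my-data-bucket",
  filename: "people.csv",
  environmentCode: "my-environment", // placeholder
  token: "my-token",                 // placeholder
  templateCode: "my-template",       // placeholder
});
// jobParams.batchSize is 1 and jobParams.context is {}
```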

Process Collection With Webservice Endpoint

| Job Type Code | Description |
|---|---|
| processCollectionWithWebserviceEndpoint | The Job Type Process Collection With Webservice Endpoint is used to process a DX Graph Collection and send the records to a webservice endpoint. The job type supports sending batches of records to a webservice endpoint. |

Input Parameters

| Parameter | Required | Description |
|---|---|---|
| collectionCode | Yes | The code of the DX Graph Collection to process. |
| filter | No | The filter to apply to the collection. |
| batchSize | No | The batch size to use. Defaults to 1. A batch size of 3 sends an array of 3 JSON records to the webservice. |
| webserviceEndpoint | Yes | The webservice endpoint to call. This is an object. Each of its properties can contain a JavaScript expression (within backticks) that references a variable called records, which is the array of batched records (based on batchSize) in the collection. |
| webserviceEndpoint.url | Yes | The URL of the webservice endpoint to call. |
| webserviceEndpoint.method | Yes | The HTTP method to use when calling the webservice endpoint. |
| webserviceEndpoint.headers | No | The headers to include in the request. |
| webserviceEndpoint.body | No | The body to include in the request. |
| webserviceEndpoint.searchParams | No | The query parameters to include in the request. |

Process Collection With DX Engine

| Job Type Code | Description |
|---|---|
| processCollectionWithDxEngine | The Job Type Process Collection With DX Engine is used to process a DX Graph Collection and send the records to DX Engine. The job type supports sending batches of records to DX Engine. |

Input Parameters

| Parameter | Required | Description |
|---|---|---|
| collectionCode | Yes | The code of the DX Graph Collection to process. |
| filter | No | The filter to apply to the collection. |
| batchSize | No | The batch size to use. Defaults to 1. A batch size of 3 sends an array of 3 JSON records to DX Engine. |
| environmentCode | Yes | The DX Engine environment code to use. |
| token | Yes | The DX Engine token to use. |
| templateCode | Yes | The DX Engine template to invoke. This can contain a JavaScript expression (within backticks) that references a variable called records, which is the array of batched records (based on batchSize) in the collection. |
| context | No | The context to include in the DX Engine request. Defaults to {}. This can contain a JavaScript expression (within backticks) that references a variable called records, which is the array of batched records (based on batchSize) in the collection. |

Download Data From Webservice

This Job Type is covered here.